Insufficient Logging And Monitoring

Welcome to Secumantra! In this post, we’re going to talk about the number ten vulnerability from the OWASP Top Ten: Insufficient Logging and Monitoring.

OWASP (Open Web Application Security Project) is a nonprofit foundation that works to improve the security of software. The OWASP Foundation is globally recognized by developers as the first step towards more secure coding. It releases the OWASP Top Ten list every few years, sharing the most critical security risks to modern web applications.

Insufficient Logging and Monitoring

Insufficient logging and monitoring is the last entry in the OWASP Top Ten. It is not a vulnerability in itself; rather, it is the lack of proper logging, monitoring, and alerting, which allows attacks and attackers to go unnoticed.

As most applications and services developed today are cloud-first, it is very important to monitor and keep track of all critical events happening across your application. This matters even more for web applications and microservices than for a traditional desktop application.

The category covers everything from unlogged events and logs that are not stored properly to warnings on which no action is taken within a reasonable time.

Detection

Insufficient Logging and Monitoring is really hard to detect from an outsider's perspective. Logs should only be exposed internally, so an outsider cannot determine whether logging and monitoring best practices are implemented.

Review the system architecture and make sure there are routines in place for handling the logs from every application and system. Many applications and systems already produce a lot of logs, but without proper routines, logging provides little value.

As per OWASP, insufficient logging, detection, monitoring and active response occurs any time:

- Auditable events, such as logins, failed logins, and high-value transactions are not logged.

- Warnings and errors generate no, inadequate, or unclear log messages.

- Logs of applications and APIs are not monitored for suspicious activity.

- Logs are only stored locally.

- Appropriate alerting thresholds and response escalation processes are not in place or effective.

- The application is unable to detect, escalate, or alert for active attacks in real time or near real time.

You are vulnerable to information leakage if you make logging and alerting events visible to a user or an attacker (see Sensitive Data Exposure).
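As a rough illustration of the first two points and the leakage warning, the sketch below logs login attempts as one JSON object per line and redacts fields that should never appear in a log. It uses only Python's standard `logging` module; the names `SENSITIVE_FIELDS`, `JsonFormatter`, `build_audit_logger`, and `log_login_attempt` are hypothetical and chosen for this example, and the set of sensitive keys is an assumption you would adapt to your own application.

```python
import json
import logging
from datetime import datetime, timezone

# Hypothetical set of field names that must never reach a log line.
SENSITIVE_FIELDS = {"password", "token", "card_number"}


class JsonFormatter(logging.Formatter):
    """Render each log record as a single JSON object."""

    def format(self, record: logging.LogRecord) -> str:
        # Extra context is attached by log_login_attempt() below.
        context = getattr(record, "context", {})
        safe_context = {
            key: ("[REDACTED]" if key in SENSITIVE_FIELDS else value)
            for key, value in context.items()
        }
        payload = {
            "time": datetime.fromtimestamp(record.created, tz=timezone.utc).isoformat(),
            "level": record.levelname,
            "event": record.getMessage(),
            **safe_context,
        }
        return json.dumps(payload, sort_keys=True)


def build_audit_logger(stream=None) -> logging.Logger:
    """Create an 'audit' logger that writes JSON lines to the given stream."""
    logger = logging.getLogger("audit")
    logger.setLevel(logging.INFO)
    logger.handlers.clear()
    handler = logging.StreamHandler(stream)  # defaults to stderr when stream is None
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    logger.propagate = False
    return logger


def log_login_attempt(logger, username, source_ip, success, **extra):
    """Log an auditable login event with enough context to investigate later."""
    level = logging.INFO if success else logging.WARNING
    event = "login_success" if success else "login_failure"
    context = {"user": username, "source_ip": source_ip, **extra}
    logger.log(level, event, extra={"context": context})
```

In a real deployment the handler would more likely ship records to a central log server than to a local stream, so that the logs are not only stored locally.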

Examples – Attack Scenarios

- Example-1: An open source project forum software run by a small team was hacked using a flaw in its software. The attackers managed to wipe out the internal source code repository containing the next version, and all of the forum contents. Although source could be recovered, the lack of monitoring, logging or alerting led to a far worse breach. The forum software project is no longer active as a result of this issue.

- Example-2: An attacker scans for users who share a common password and can take over every account that uses it. For all other users, the scan leaves behind only a single failed login. After some days, the scan may be repeated with a different password.

- Example-3: A major US retailer reportedly had an internal malware analysis sandbox analyzing attachments. The sandbox software had detected potentially unwanted software, but no one responded to this detection. The sandbox had been producing warnings for some time before the breach was detected due to fraudulent card transactions by an external bank.
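The scan in Example-2 is hard to spot per account, because each user sees only one failed login. It becomes visible when failures are grouped by source instead. The following is a minimal sketch of that idea, assuming login events are available as dictionaries with `source_ip`, `user`, and `success` keys (a hypothetical format). The function name and both thresholds are illustrative, not recommended values.

```python
from collections import defaultdict


def find_password_spraying(events, min_distinct_users=5, max_attempts_per_user=2):
    """Return {source_ip: [users]} for sources that fail against many users,
    each with only a few attempts (the typical shape of a common-password scan).
    """
    # failures[source_ip][user] -> number of failed logins
    failures = defaultdict(lambda: defaultdict(int))
    for event in events:
        if not event["success"]:
            failures[event["source_ip"]][event["user"]] += 1

    suspects = {}
    for source_ip, per_user in failures.items():
        # Users that were tried only once or twice from this source.
        low_volume_users = [
            user for user, count in per_user.items()
            if count <= max_attempts_per_user
        ]
        if len(low_volume_users) >= min_distinct_users:
            suspects[source_ip] = sorted(low_volume_users)
    return suspects
```

An attacker who spreads the scan across many source addresses would evade this simple grouping, so in practice it would be one signal among several rather than a complete detector.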

Prevention

As per the risk of the data stored or processed by the application, OWASP recommends the following preventive measures:

- Ensure all login, access control failures, and server-side input validation failures can be logged with sufficient user context to identify suspicious or malicious accounts, and held for sufficient time to allow delayed forensic analysis. This is valuable when investigating a hack afterwards.

- Ensure that logs are generated in a format that can be easily consumed by a centralized log management solution. Make sure the logs are backed up and synced to another server.

- Ensure high-value transactions have an audit trail with integrity controls to prevent tampering or deletion, such as append-only database tables or similar.

- Establish effective monitoring and alerting such that suspicious activities are detected and responded to in a timely fashion.

- Establish or adopt an incident response and recovery plan.
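One way to picture the "integrity controls" mentioned for audit trails is a hash chain, where every entry stores the hash of the previous one so that edits and deletions become detectable. The sketch below is an in-memory illustration under that assumption, not the mechanism OWASP prescribes. The names `append_entry` and `verify_trail` are hypothetical, and a real system would persist the entries in append-only storage.

```python
import hashlib
import json

# Placeholder "previous hash" for the very first entry.
GENESIS_HASH = "0" * 64


def _entry_hash(prev_hash, record):
    """Hash the previous entry's hash together with a canonical form of the record."""
    body = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(trail, record):
    """Append a record to the trail, linking it to the previous entry."""
    prev_hash = trail[-1]["hash"] if trail else GENESIS_HASH
    entry = {
        "record": record,
        "prev_hash": prev_hash,
        "hash": _entry_hash(prev_hash, record),
    }
    trail.append(entry)
    return entry


def verify_trail(trail):
    """Return the index of the first inconsistent entry, or None if the chain is intact."""
    prev_hash = GENESIS_HASH
    for index, entry in enumerate(trail):
        expected = _entry_hash(prev_hash, entry["record"])
        if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
            return index
        prev_hash = entry["hash"]
    return None
```

A chain like this only detects tampering; it does not prevent it. An attacker who can rewrite the whole trail can recompute every hash, which is why the latest hash is usually also stored somewhere the application itself cannot modify.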

Companies must increase their investments in security, both in personnel and in tools and technologies, so that they can identify security incidents efficiently and precisely and react adequately. It takes far too long to detect complex threats. Once an attacker has gained access to a network, they often have plenty of time to cause irreparable damage.

The cost of an attack can be reduced by quickly identifying the threats. Monitoring is required so that the logs do not simply pile up unnoticed on the log server. Logging alone is therefore not enough; logs also have to be processed and checked regularly so that unusual events and anomalies are detected in good time.
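To make "processed and checked regularly" a little more concrete, here is a minimal sketch of a sliding-window threshold check on failed logins. The class name `FailedLoginMonitor`, the threshold, and the window length are assumptions made for this example. It also assumes timestamps are given in seconds and arrive in non-decreasing order for each source.

```python
from collections import defaultdict, deque


class FailedLoginMonitor:
    """Signal an alert when one source exceeds a failure threshold within a time window."""

    def __init__(self, threshold=5, window_seconds=60):
        self.threshold = threshold
        self.window_seconds = window_seconds
        # source_ip -> timestamps of recent failures, oldest first
        self._failures = defaultdict(deque)

    def record_failure(self, source_ip, timestamp):
        """Record one failed login and return True if an alert should be raised."""
        window = self._failures[source_ip]
        window.append(timestamp)
        # Drop failures that are older than the window.
        while window and timestamp - window[0] > self.window_seconds:
            window.popleft()
        return len(window) >= self.threshold
```

A usage example: with `FailedLoginMonitor(threshold=3, window_seconds=60)`, three failures from the same address within a minute make the third call return `True`. The caller would then notify whoever is responsible for responding.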

There are commercial and open source application protection frameworks such as OWASP AppSensor, web application firewalls such as ModSecurity with the OWASP ModSecurity Core Rule Set, and log correlation software with custom dashboards and alerting.

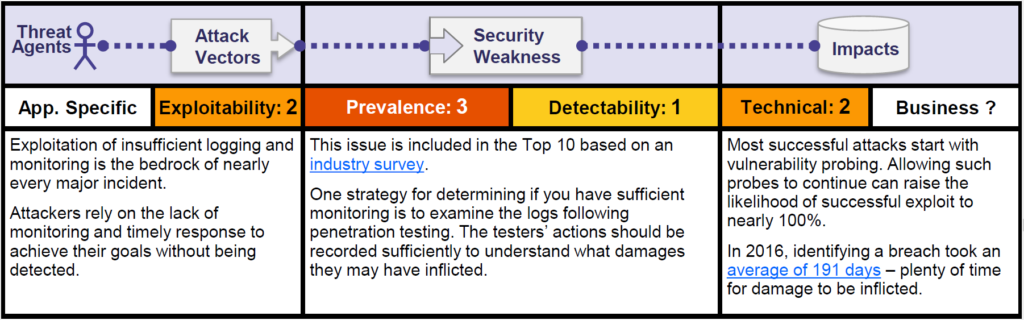

OWASP Risk Rating

Let us look at the OWASP risk rating matrix –

OWASP added this vulnerability to the list based on an industry survey rather than quantifiable data. It is unclear how many systems are affected. The OWASP community has included “Insufficient Logging & Monitoring” in the OWASP Top 10, ranking it ahead of risks such as cross-site request forgery (CSRF) and open redirects, both of which were dropped from the 2017 ranking.

Many criticized this decision because “logging and monitoring” is not a clear vulnerability like the other risks. Rather, it is a best practice guide to protect the application. OWASP justifies this by stating that logging and monitoring are the cornerstones of a modern and secure system.

In 2016, the average time to detect an attack was 191 days. Had the breaches been detected earlier, the impact could have been drastically reduced.

When a security breach is not discovered in time, the attackers have time to escalate the attack further into the system. It also means they can use the stolen data for malicious purposes for a longer time.

With regard to this risk, OWASP rates the exploitability of this vulnerability as “medium”, its prevalence as “high”, and its detectability as “low”.

Summary

Most breach studies show that the time to detect a breach is over 200 days, and that breaches are typically detected by external parties rather than by internal processes or monitoring. Due to insufficient logging and monitoring, compromises are sometimes not detected at all or are detected too late. On average, it takes roughly seven months to detect a security attack.

Insufficient logging and monitoring, coupled with missing or ineffective integration with incident response, allows attackers to further attack systems, maintain persistence, pivot to more systems, and tamper, extract, or destroy data.

When securing your applications against any critical vulnerability (especially those from the OWASP Top Ten), it is always good practice to follow a defense-in-depth strategy.

Thank you for reading. Stay Safe, Stay Secure!